Variance Reduction

General considerations

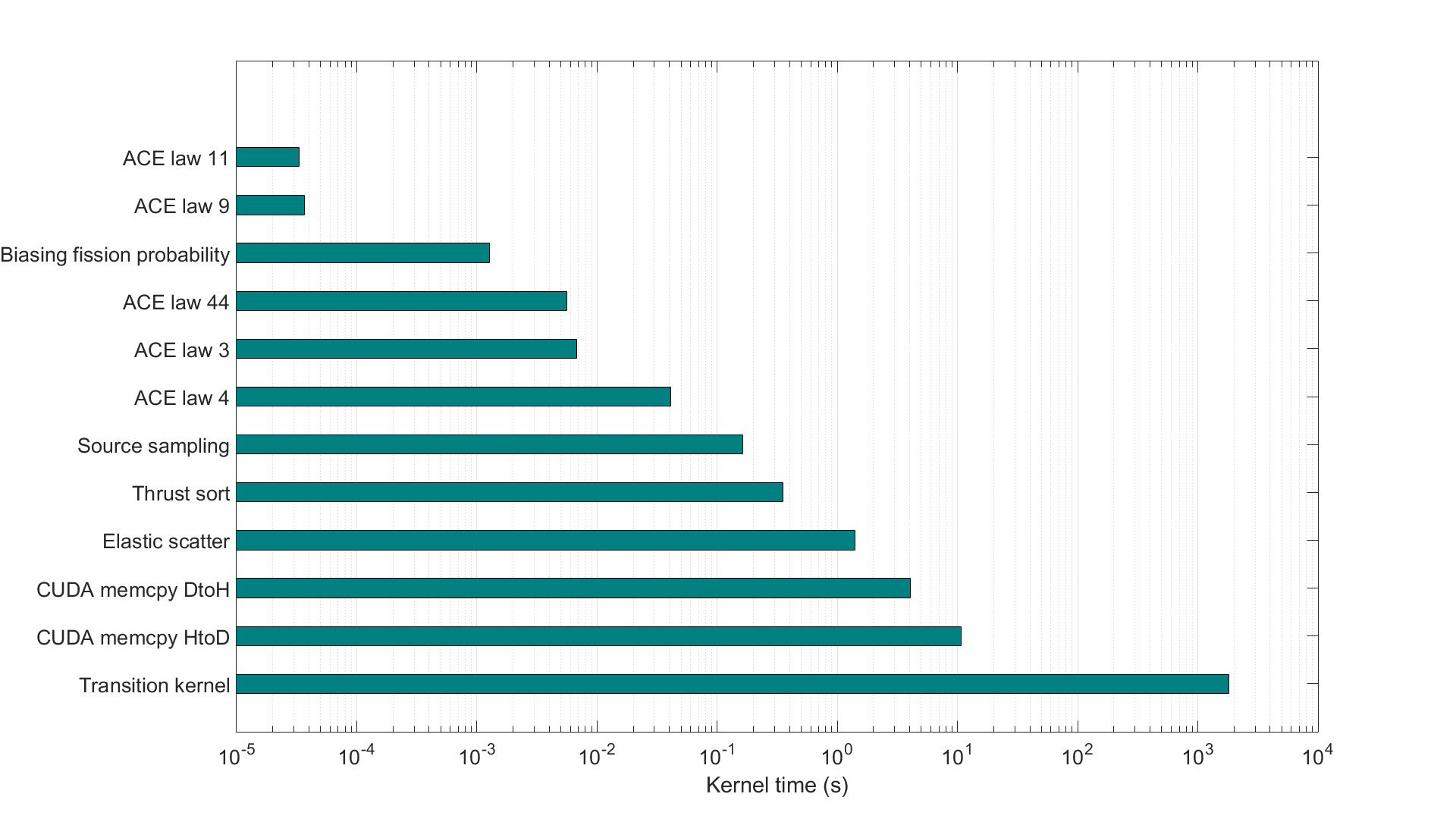

The time dependent tracking of the neutron population in a multiplying, near critical medium is very challenging in terms of Monte Carlo convergence. A naive analog game in most cases would statistically diverge, moreover it will give an underestimate of the power as the very low chance contributions of a high number of fission in certain chains see Fig. 1. Therefore the calculation is performed always keeping a single particle as a sample of the neutron population gaining or loosing weight at interactions. The neutron weight distribution must be kept around the mean for ensuring statistical convergence. The neutrons are followed from time interval to time interval and the population at the interval ends using splitting and Russian roulette while keeping the total population number constant. Having single, non-branching calculations also supports the architecture of the GPU where threads can be set to single neutron chains.

Figure 1.: Analog and non-analog simulation results for time dependent power evolution for a multiplying medium. Analog simulation produces an underestimate of the power

Biased sampling schemes are applied at fission yield, delayed neutron, interaction type sampling with ongoing development regarding path length sampling and angular biasing.

Optimization of the GPU workflow: history based vs. event based simulations

The key idea of GUARDYAN is a massively parallel execution structure making use of advanced programming possibilities available on CUDA enabled GPUs. In order to maximize performance however, the architecture calls for some major deviations from a traditional MC code.

We have established two independent branches of the code, both designed to exploit the parallel capabilities of the GPU, although via quite different strategies. The first branch uses a very straightforward approach, that is the concurrent simulation of particle histories. One working unit is designated to simulate a particle history from birth to death, following the traditional history based structure. This idea exploits the inherent parallelism of MC tracking, i.e. particle histories are independent of each other. On the other branch the code is vectorized, meaning that simultaneously executed operations are expected to be identical. While vectorization of the code would not mean much difficulty in case of deterministic methods, MC simulations are ill-suited for this task by the random nature of the process. Preserving a history-based structure is in this case unfeasible, thus an event-based strategy was implemented. A parallel MC calculation is called event-based when only particles undergoing the same event are simulated concurrently. In this case, one working unit is assigned to calculate the outcome of one event in a particle history. The tracking routine first assigns events (e.g. free-flight, fission, elastic scatter) to particles, creating stacks of particles undergoing the same event, then these stacks are processed separately.

Parallel optimization structures

Currently GUARDYAN runs on a machine containing two Nvidia GeForce GTX 1080 cards, each with 8 GBytes of global memory and 5500 GFLOP/s single precision performance accordingto NBody GPU benchmark. The GTX 1080 cards are based on the Pascal architecture and have 2560 scalar working units (CUDA cores). These cores can launch warps of 32 concurrent threads,resulting in a theoretical maximum of 81920 parallel working units. The optimal number of concurrent threads may of course differ due to memory and arithmetic latency considerations. Thread management is implemented by organizing a desired number of threads into blocks, which are required to execute independently. This also ensures automatic scalability of the program, as blocks of threads can be scheduled on any multiprocessors of the device, yielding faster execution time when more multiprocessors are available. Functions executed in parallel are called kernels in CUDA terminology. Kernels are launched by specifying the number of threads in a block, and the total number of blocks. In general, to choose the number of threads in a block as a multiple of warp size(32) is a good idea, however, CUDA offers an opportunity to maximize kernel performance automatically: calling the cudaOccupancyMaxPotentialBlockSize function for every kernel ensures optimal occupancy in terms of arithmetic intensity and memory latency.

Memory Management

CUDA distinguishes six memory types: register, local, shared, texture, constant and global memory.Registers ensure the fastest memory access and are assigned to each thread. Global, constant and texture memory can be accessed by all threads, while the scope of shared memory is only a block. In exchange, it is much faster. Texture memory is not truly a distinct memory type, it only labels a part of global memory that is bound to texture. Textures are implemented with hardware interpolation, thus they would be ideal for storing cross section data. But due to random memory access patterns inherent in MC simulations, using cached memory is not advised in this case ,thus cross sections are stored in global memory. A severe limitation for MC applications is the sizeof global memory, e.g. in GUARDYAN, nuclear data for one temperature occupies about 6GB ona card with global capacity of 8GB. Memory transactions between GPU (device) and CPU (host) are carried out through reading and writing global memory. As the access of global memory is slow, these transactions can also take a considerable time, and can have a significant impact on overall performance.Notice, that if the simulation structure is changed (e.g. a history-based algorithm is vectorized),register use, global memory reads and host-device communication will show different behavior,also influencing runtime. Thus the performance gain from vectorization will be obscured.

Thread Divergence

When implementing a MC neutron transport code special attention must be paid to choosing the right kernels, as the slowest working thread will determine the efficiency of parallelization. All other threads in a warp must wait for the thread finishing last. Loops, conditional and branching statements lead to thread divergence, an uneven distribution of work-load. This issue is targeted by the vectorization of the code, i.e. the event-based Monte Carlo simulation. In event-based GUARDYAN this is implemented by distinguishing kernel functions for different types of events instead of just one ”big” kernel (as in the history-based GUARDYAN). When calling these kernels,threads of a warp executing the same operations on particles (the same event is simulated) do not branch, whereas in a history-based simulation threads may easily diverge as one particle may be in a transition step while the other scatters or induces fission. Branching statements in a warp are executed serially in CUDA: an if-else statement is executed for all warps in two cycles (both branch is executed one after the other). When the ”if” branch is executed, the threads that do not satisfy the condition (would diverge to the ”else” branch) are flagged and perform a NOP (no operation).This results in the degradation of parallel performance. However, it does not necessarily mean that the vectorized version of the code will execute faster. One reason was given in the previous section, regarding memory management issues. Neither should we neglect that the compiler also does some optimization to reduce the penalty due to thread divergence [13], among these are wellknown tools like warp-voting and predication, but CUDA uses optimization tools that are hidden from the programmer.

Event based and History based approach comparison: Test Case 1

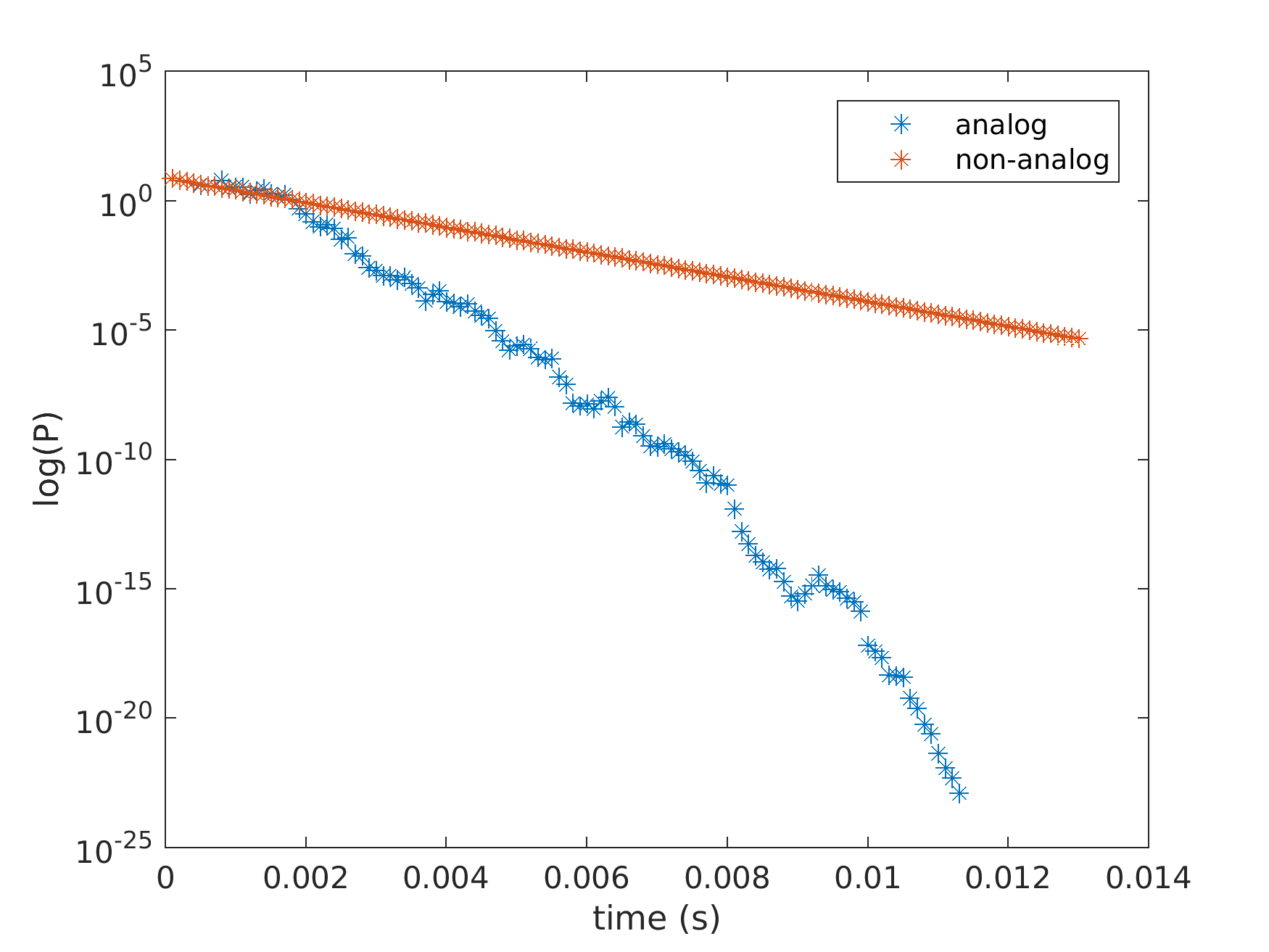

GUARDYAN has been recently validated against MCNP6 in a simplified setup assuming a monoenergetic neutron source inside a homogeneous sphere. 4 106 neutrons were launched at energies 0.01eV , 1eV , 1keV , 1MeV and 18MeV , and tracked until either leaking out of the sphere or exceeding time boundary. The simulation was carried out for 412 isotopes and was used for validation of the code comparing the spectrum of leaking neutrons to MCNP6 results. Cross section library ENDF/B-VII.1 was used assuming temperature of 293:6K. Regarding our investigation of event-based tracking, wall-time was measured for both history-based (TH) and event-based (TE) simulations. In Fig. 2, histograms of simulation speedup are plotted for all starting energies. Speedup is simply defined by

Speedup= TE/TH

i.e. the ratio of wall-times. Fig. 2 shows that vectorization of the code resulted in faster execution time in most cases. Typical speedup was around 1:5-2, but longer simulation time was observed mainly when starting energy is below 1MeV .

Figure 2.: Speedup figures for the simple spherical geometry

The efficiency loss was experienced in case of isotopes with high probability for fission around the starting energy. When the starting energy is low, neutrons released in fission take on much higher velocity than starters, thus leaking out of the system very fast. As a result, significant part of computational effort was spent on a few neutrons bouncing around in the system. Population drop caused vectorization gain to be cancelled due to the computational overhead of event-based tracking (particles need to be sorted by event type). On higher starting energies, no considerable speedup was observed in case of elements with low atomic numbers, the improvement from vectorization was more expressed when heavy elements were present. This is due to that the outgoing energy and angle of a neutron scattered on a light isotope are derived by simple laws of collision mechanics, while more complicated energy laws are applied when heavier isotopes are present . In GUARDYAN beside elastic scatter only ACE law 3 (inelastic discrete-level scattering) was used in the former case, and ACE law 4 (and 44) was additionally used in the latter. ACE law 4 represents a continuous tabular distribution, the outgoing energy is given as a probability distribution for every incoming energy. This sampling procedure takes considerably more time, contributing to thread divergence, and resulting in substantial efficiency boost for event-based tracking.

Event based and History based approach comparison, Test Case 2: simple subcritical assembly

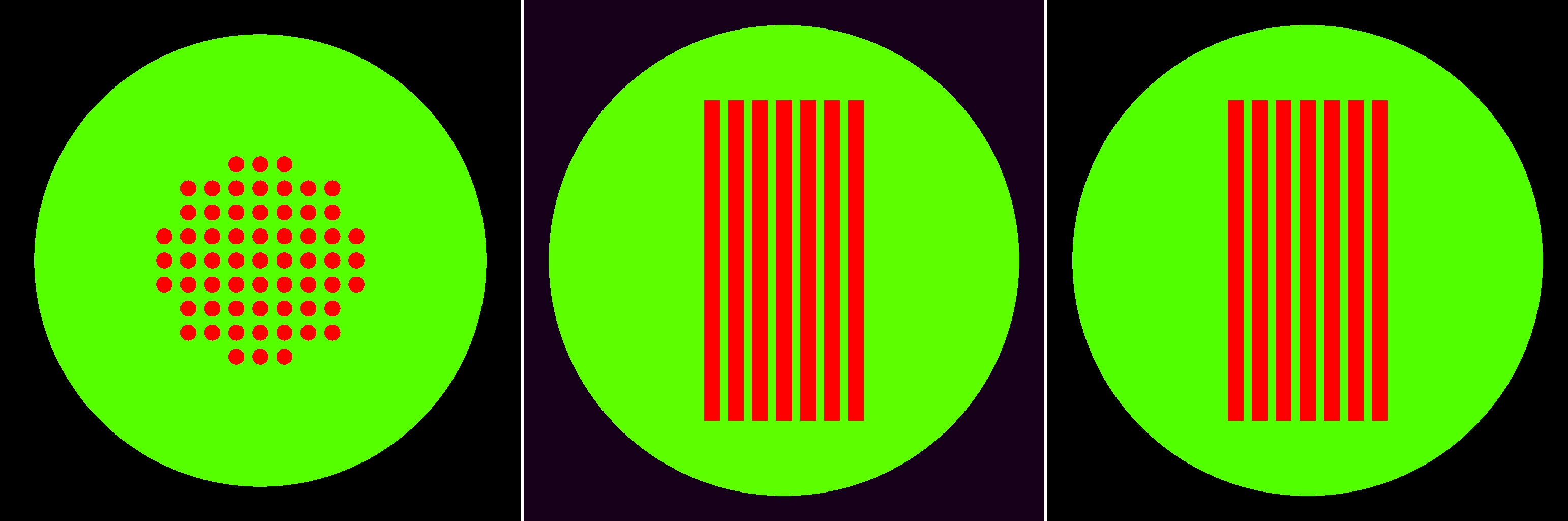

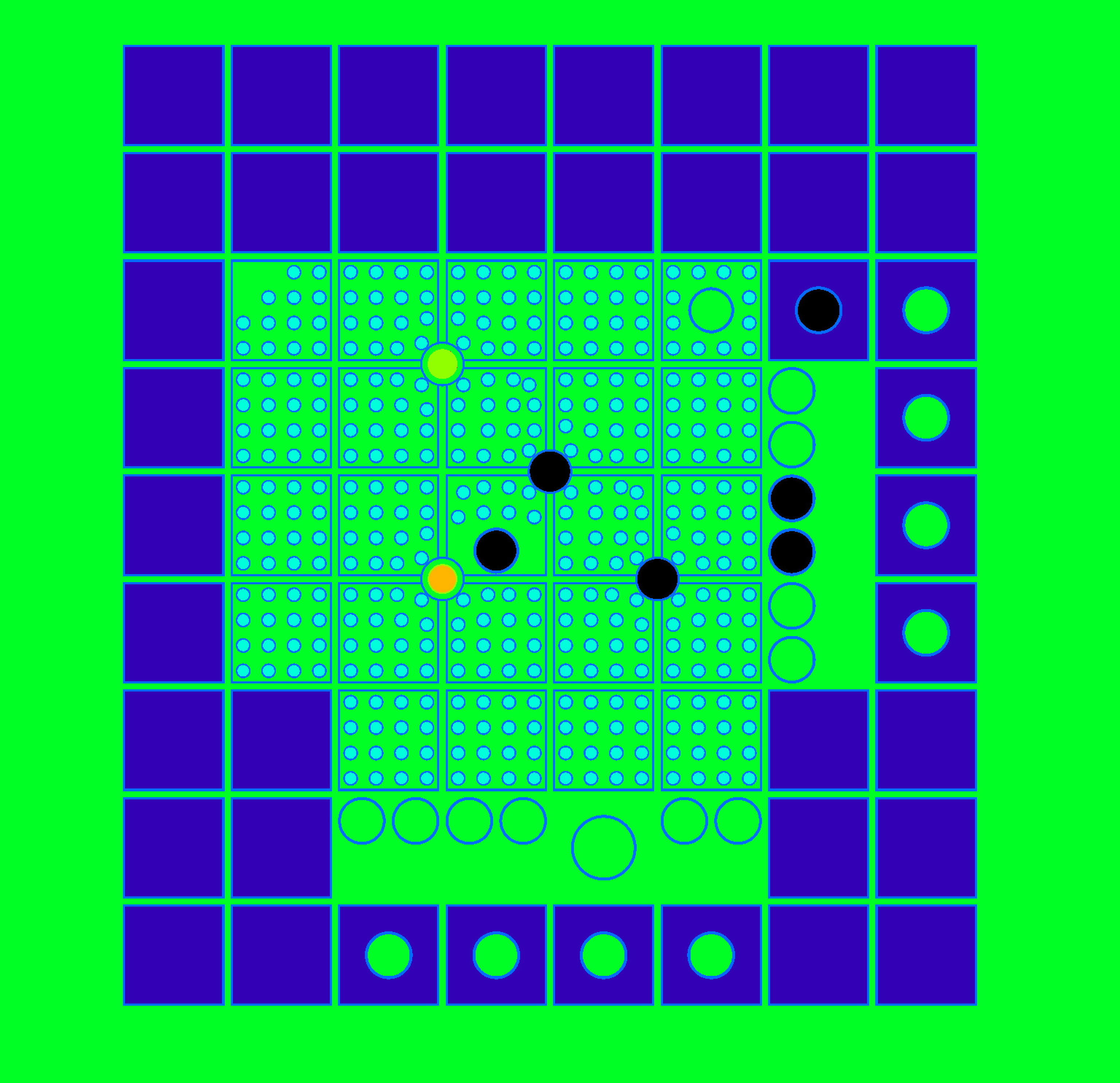

The second test case was the verification subcritical model (see Modelling and Verification section) was a 30 cm radius water sphere with 61 UO2 cylindrical fuel rods of 40 cm length, 1 cm radius spaced 1 cm apart from each other see Fig. 1.. Uranium was 4.7% enriched and fuel rod density was taken as 10.5g/cm3.

Figure 3.: Geometry of the subcritical verification model

Table 1 shows execution times measured during the simulation of neutron transport in the inhomogeneous sample problem. Wall-times of history-based and event-based versions show no significant difference, the vectorized code performed slightly better. It must be noted that although speedups are also shown relative to MCNP runtime, these should not be considered conclusive, as the MCNP simulation ran on a single core, also, GUARDYAN is highly underoptimized. Nevertheless a factor of 6 was observed in performance over the single core MCNP simulation.

| MCNP6 | GUARDYAN history-based | GUARDYAN event-based | |

| Wall-time (min) | 39.9 | 6.73 | 6.22 |

| Speedup | — | 5.93 | 6.41 |

Table I.: Speedup factors for the subcritical assembly

Event based and History based approach comparison, Test Case 3: BME Training Reactor

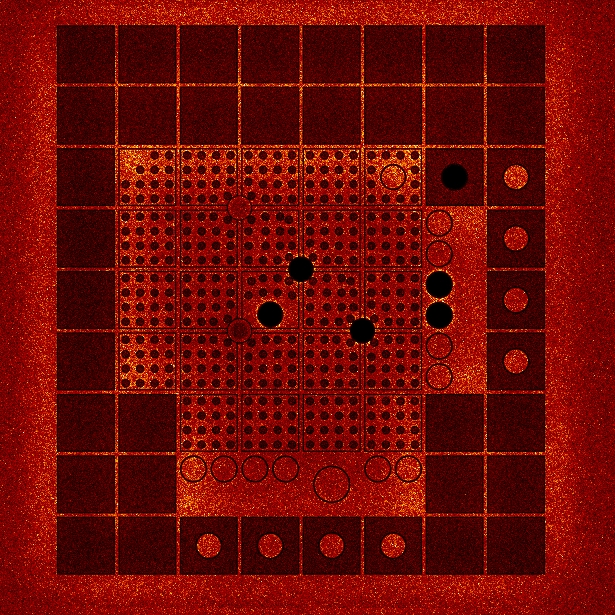

GUARDYAN is capable of simulating neutron transport in complex geometries, but is has yet to be further developed in order to provide quantitative results. The event-based version was tested on the geometry of the training reactor (BME Training Reactor) at Budapest University of Technology and Economics, see Fig. 4.

BME Training Reactor Geometry

Neutron density distribution in the BME Training reactor calculated by GUARDYAN

Figure 4.: BME Training Reactor geometry and neutron density

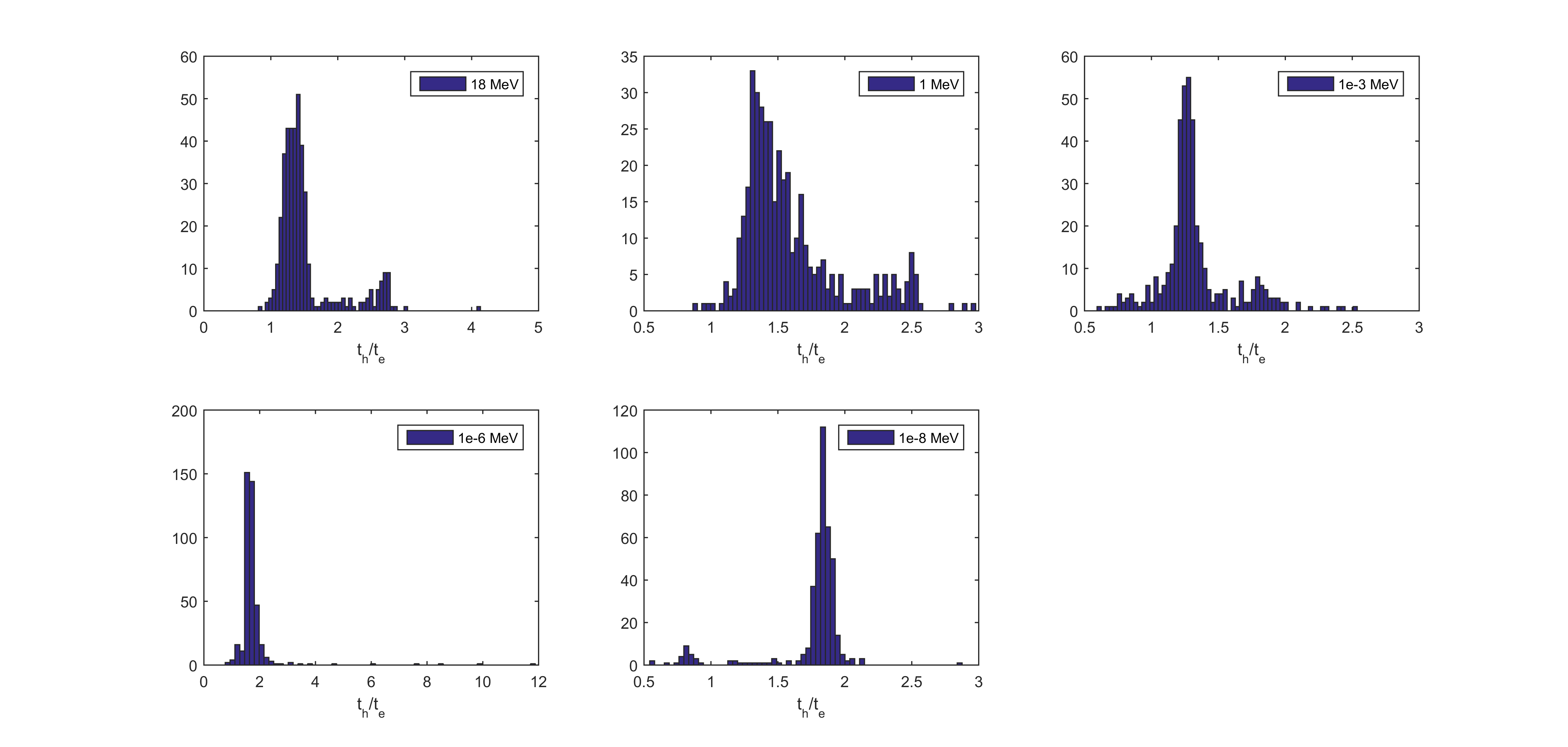

We experienced, that the vectorized code ran 1.5x slower than the history-based algorithm. To better understand the underlying reasons we looked into the kernel execution times. In case of the event based version of GUARDYAN, every energy law was implemented in a separate kernel, thus an application profiling tool is able to reveal which task consumed most resources. Inspecting the profile shown in Fig. 5, several conclusions can be made:

- The main part of the execution time is due to calling the ”transition kernel”. This function transports a particle to the next collision site, and performs the selection of reaction type for that particle. Long calculation time is most likely caused by theWoodcock method used for path length selection (a phenomenon termed the heavy absorber problem) and slow energy grid search algorithms implemented in GUARDYAN. A possible solution for the heavy absorber problem may be solved according to our recent investigation of biased Woodcock algorithms

- Memory transaction costs are much greater than computational costs of simulating different reactions. The ”CUDA memcpy DtoH” and ”CUDA memcpy HtoD” tasks stand for the communication between host and device, taking up more simulation time than simulating elastic scatter and ACE laws.

- The ”Thrust sort” kernel includes all computational overhead that is associated with event-based tracking. Note, that sorting is done two orders of magnitudes faster than memory transactions.

Fig. 5 indicates that history based tracking may be more effective because most of the calculation time is due to calling one kernel (called ”transition kernel”) which is applied to all particles before every collision. In order to execute the simulation of any type of reaction, the event based version must wait for the transition step to end for all particles. On the other hand, the history-based simulation can go on unsynchronized, i.e. threads may diverge (one may execute a transition step while the other simulates a collision), but threads do not need to wait for others to proceed. By optimization of the transition step, we may reach a different conclusion.

Attachments (7)

- NRDI.jpg (34.8 KB ) - added by 8 years ago.

- TDMCC_varP_analog_vs_nonanalog.png (61.8 KB ) - added by 8 years ago.

- event_vs_history_times_new.m.png (106.7 KB ) - added by 8 years ago.

- UOH2O_geometry.png (246.7 KB ) - added by 8 years ago.

- BME_OR_1e-6s_n2e26_neutrondensity_Guardyan_v3.jpg (528.0 KB ) - added by 8 years ago.

- BMEOR_v3.jpg (1.2 MB ) - added by 8 years ago.

- bmeor_profile.png (74.6 KB ) - added by 8 years ago.